Legacy COBOL code is largely used in critical systems like those of banks and airlines. What could go wrong with having that code rewritten by stochastic parrots who get programming answers wrong half of the time?

LLMs produce code that is functionally error-prone while looking reasonable, in the same way that they produce answers that are grammatically correct and correctly spelled but factually incorrect.

As we all know, fixing bugs in someone else’s code is generally harder than writing the code correctly in the first place, and that applies to an LLM’s code output just as much as to a human’s, if not more.

That’s assuming they’re using one of the generic models like ChatGPT and not something custom they’ve created specifically to do this.

Edit: according to the article, they are in fact using their own.

I’m aware they’re not using a generic model, but that’s not much better. Current custom-made models still fuck up significantly more than humans, and in less predictable ways.

Even if their custom model is slightly incorrect 1% of the time, that’s still a major problem in critical systems like those.
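To put a rough number on that, here is a back-of-the-envelope calculation. The assumption is mine, purely for illustration: errors are independent, and each of $n$ translated modules has a 1% chance of coming out wrong.

$$
P(\text{at least one faulty module}) = 1 - (1 - 0.01)^{n}, \qquad n = 100:\quad 1 - 0.99^{100} \approx 1 - 0.366 \approx 0.63
$$

So even at that error rate, a 100-module migration would more likely than not contain at least one bug, under the independence assumption.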

Which models are those?

It’s good to see that they aren’t just piping through GPT-whatever, but in my experience, the vast majority of people who tout AI, even at large corporations, generally have zero idea how it works. I’m still not convinced that this is a good idea.

I mostly use A.I. to translate. ChatGPT does that pretty well, especially when you say “Translate this Mandarin text into English. I don’t care if it is somewhat inaccurate, just do it as best as you can.”

The AI would likely be trained or fine-tuned specifically for COBOL. In very narrow use cases like this, AI can find some things that humans miss.

Google did this recently with a sorting algorithm and was able to make it up to 70% faster (for short sequences).

It’s cool for small and easily testable functions like sorting, but to refactor large amounts of code? No thanks. Would be great if it could leave comments on my pull request though.
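Part of why sorting is the easy case: there is a trusted reference to compare against and random inputs are cheap. A minimal sketch of that kind of differential test is below. `candidate_sort` and `differential_test` are hypothetical names I made up; the candidate is just an insertion sort standing in for generated code.

```python
import random

def candidate_sort(xs):
    # Hypothetical stand-in for a machine-generated routine (an insertion sort).
    out = list(xs)
    for i in range(1, len(out)):
        key = out[i]
        j = i - 1
        while j >= 0 and out[j] > key:
            out[j + 1] = out[j]  # shift larger elements one slot right
            j -= 1
        out[j + 1] = key
    return out

def differential_test(fn, reference, trials=1000, seed=0):
    """Run fn and a trusted reference on random lists.
    Returns the first input where they disagree, or None if all trials match."""
    rng = random.Random(seed)
    for _ in range(trials):
        xs = [rng.randint(-100, 100) for _ in range(rng.randint(0, 20))]
        if fn(xs) != reference(xs):
            return xs
    return None

print(differential_test(candidate_sort, sorted))  # None: no mismatch found
```

A large COBOL program rarely has an equivalent oracle, or inputs this cheap to generate, which is the gap the comment is pointing at.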

Try PR-Codex

I thought it would leave comments on individual lines of code with feedback on code quality, but it seems like it just summarizes what the pull request changes.

The summary stuff would be better if it were per file instead of overall.

Hm don’t think I can help you with that unfortunately.

It’s nice for quickly seeing what a PR is about, not much more

I suppose I shouldn’t be surprised at the negative response here, but personally this seems like the perfect application of LLMs. Yeah, it’ll need to be verified by humans, but so would human-translated code. Using an appropriately trained LLM to do the first pass translation has the potential to eliminate a lot of toil.

From the forum: “If I know IBM at all, behind the scenes it’ll end up being a bunch of junior programmers doing the work, after the AI branded tech fails. It’ll still be called Watson tho…” https://arstechnica.com/civis/threads/ibm’s-generative-ai-tool-aims-to-refactor-ancient-cobol-code-for-its-mainframes.1495343/post-42133422

One reason I’ve seen for past efforts like this to fail is that COBOL uses fixed-point decimals instead of floating point. As a result, the decimal math functions are largely integer based and blazingly fast without losing precision.
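To make “integer based” concrete, here is a rough sketch of how a COBOL field like `PIC S9(7)V99` can be modeled. The `V` is an implied decimal point: the value is held as an integer count of hundredths, and the scale comes from the declaration rather than from the stored number. The helper names `to_fixed`/`to_text` are hypothetical, and excess fractional digits are simply truncated here.

```python
SCALE = 2  # digits after the implied decimal point, as in PIC S9(7)V99

def to_fixed(text: str) -> int:
    """Parse a decimal string like '123.45' into an integer count of hundredths."""
    sign = -1 if text.startswith("-") else 1
    whole, _, frac = text.lstrip("+-").partition(".")
    frac = (frac + "0" * SCALE)[:SCALE]  # pad, or truncate extra digits, to the scale
    return sign * (int(whole or "0") * 10**SCALE + int(frac))

def to_text(value: int) -> str:
    """Format an integer count of hundredths back into a decimal string."""
    sign = "-" if value < 0 else ""
    whole, frac = divmod(abs(value), 10**SCALE)
    return f"{sign}{whole}.{frac:0{SCALE}d}"

# Adding two amounts is plain integer addition, so nothing is lost.
a = to_fixed("0.10")
b = to_fixed("0.20")
print(to_text(a + b))  # 0.30
print(0.1 + 0.2)       # binary floats give 0.30000000000000004
```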

When we translate COBOL to modern languages, though, we end up either using floats (which famously lose precision) or reimplementing fixed-point math in the new language, which often ends up significantly slower than the COBOL code. And when you’re streaming millions of financial transactions per second, you really need both precision and speed.
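The precision half of that trade-off is easy to show. The sketch below sums one million hypothetical $0.10 transactions three ways: binary floats, Python’s `decimal` module, and scaled integers (cents). It says nothing about COBOL’s actual speed; it only illustrates the drift.

```python
from decimal import Decimal

N = 1_000_000  # hypothetical number of $0.10 transactions

float_total = 0.0
for _ in range(N):
    float_total += 0.10  # 0.1 has no exact binary representation, so error accumulates

decimal_total = sum(Decimal("0.10") for _ in range(N))  # exact decimal arithmetic

cents_total = 10 * N  # scaled integer: total in cents

print(float_total)    # close to, but not exactly, 100000.0
print(decimal_total)  # 100000.00
print(cents_total)    # 10000000
```

In Python the `Decimal` loop is typically slower than the float loop, which mirrors the speed cost the comment describes; the exact ratio depends on the runtime, so treat that as indicative only.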

I’m hopeful that somebody will crack that nut and free the finance sector from the 1970s… But there’s more to it than the usual challenges of a major rewrite.

If you target the same hardware in the end, why wouldn’t it be possible for another language to implement the same arithmetic with the same performance? I’m sure modern languages’ metaprogramming could enable that, with a syntax that even sucks less…
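On the syntax side, operator overloading does get you most of the way. Below is a sketch of a hypothetical `Fixed` type that stores an integer and hides the scaling behind `+` and `*`. Truncating toward zero on multiply is one possible rule I picked for illustration, not necessarily the one a given COBOL program uses. It also says nothing about performance: a Python class like this will be far slower than native decimal arithmetic.

```python
from dataclasses import dataclass
from decimal import Decimal

@dataclass(frozen=True)
class Fixed:
    raw: int        # the value times 10**scale, stored as an integer
    scale: int = 2  # digits after the decimal point

    @classmethod
    def parse(cls, text: str, scale: int = 2) -> "Fixed":
        # Decimal parses the string exactly; scaleb shifts the point, int() truncates.
        return cls(int(Decimal(text).scaleb(scale)), scale)

    def __add__(self, other: "Fixed") -> "Fixed":
        if self.scale != other.scale:
            raise ValueError("this sketch only adds values with matching scales")
        return Fixed(self.raw + other.raw, self.scale)

    def __mul__(self, other: "Fixed") -> "Fixed":
        # The integer product has scale self.scale + other.scale;
        # divide back down to self.scale, truncating toward zero.
        product = self.raw * other.raw
        q = abs(product) // 10**other.scale
        return Fixed(q if product >= 0 else -q, self.scale)

    def __str__(self) -> str:
        sign = "-" if self.raw < 0 else ""
        whole, frac = divmod(abs(self.raw), 10**self.scale)
        return f"{sign}{whole}.{frac:0{self.scale}d}"

price = Fixed.parse("19.99")
tax_rate = Fixed.parse("0.075", scale=3)
print(price + Fixed.parse("0.01"))  # 20.00
print(price * tax_rate)             # 1.49 (exact product 1.49925, truncated)
```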

Well, partially because in some cases it isn’t the same hardware! There are mainframes built to run COBOL programs efficiently, like IBM’s Z machines. In those setups, you’d likely have something like a standard Linux server acting as the API front end and forwarding requests to the COBOL machine.

And what makes them different? Well, the CPU has dedicated instructions for certain fixed-point operations. For a given request that’s only a few nanoseconds faster, but when the vast majority of your workload is performing those operations, it adds up quickly.
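As far as I know, the decimal instructions on IBM Z operate on packed decimal operands, which is the storage format COBOL calls `COMP-3`: two BCD digits per byte, with the last nibble holding the sign (`C` for positive, `D` for negative). The sketch below only encodes and decodes that format in software; it doesn’t model the hardware arithmetic. The function names are hypothetical, and decoding treats only `D` as negative, which is a simplification.

```python
def pack_decimal(value: int) -> bytes:
    """Encode an integer as packed decimal: two BCD digits per byte,
    with the final low nibble holding the sign (0xC = +, 0xD = -)."""
    digits = str(abs(value))
    if len(digits) % 2 == 0:
        digits = "0" + digits  # pad so digits plus the sign nibble fill whole bytes
    nibbles = [int(d) for d in digits] + [0xD if value < 0 else 0xC]
    return bytes((hi << 4) | lo for hi, lo in zip(nibbles[0::2], nibbles[1::2]))

def unpack_decimal(data: bytes) -> int:
    """Decode packed decimal back to an integer (only 0xD is treated as negative)."""
    nibbles = [n for b in data for n in (b >> 4, b & 0x0F)]
    *digits, sign = nibbles
    magnitude = int("".join(str(d) for d in digits))
    return -magnitude if sign == 0xD else magnitude

print(pack_decimal(123).hex())    # 123c
print(pack_decimal(-1234).hex())  # 01234d
```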

Another issue is rounding error. With fixed-point numbers you still have to round off partial results, and the rounding rules are surprisingly complex. So when you port from COBOL to Java, you need to port the rounding rules too, or you’ll get different results when you rerun last month’s reports. No bueno.
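Here is how much the rule matters on a single value. As I understand it, a COBOL arithmetic statement without `ROUNDED` truncates the extra digits, and `ROUNDED` traditionally rounds half away from zero. Python’s `decimal` module, by contrast, defaults to round-half-even, and Java’s `BigDecimal` generally makes you pick a `RoundingMode` explicitly. The sketch applies three modes to the same amounts; `round_three_ways` is a hypothetical helper name.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_EVEN, ROUND_HALF_UP

CENT = Decimal("0.01")  # round results to two decimal places

def round_three_ways(amount: Decimal) -> tuple[Decimal, Decimal, Decimal]:
    return (
        amount.quantize(CENT, rounding=ROUND_DOWN),       # truncate toward zero
        amount.quantize(CENT, rounding=ROUND_HALF_UP),    # ties go away from zero
        amount.quantize(CENT, rounding=ROUND_HALF_EVEN),  # ties go to the even digit
    )

for amount in (Decimal("2.345"), Decimal("2.355"), Decimal("-2.345")):
    print(amount, *round_three_ways(amount))
# 2.345 2.34 2.35 2.34
# 2.355 2.35 2.36 2.36
# -2.345 -2.34 -2.35 -2.34

print(round(2.675, 2))  # 2.67: the float 2.675 is stored as slightly less than 2.675
```

Same input, up to three different cents values, and that difference compounds across millions of rows.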

Anyway, all this is not to say that COBOL is better or worse than any other language, just that its primitives differ in behavior from other languages in important ways that can make it hard to migrate.

This is the best summary I could come up with:

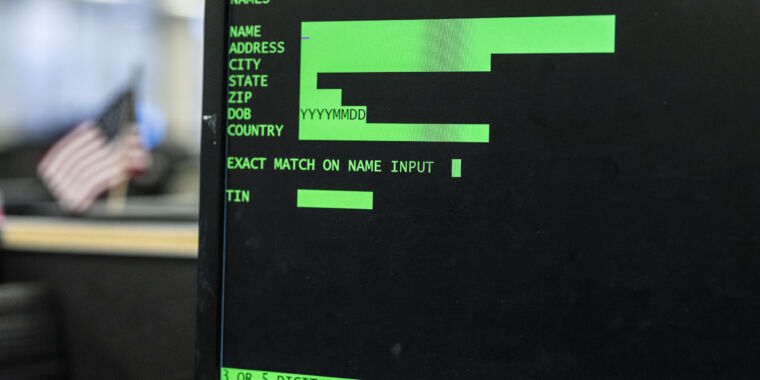

IBM, eager to keep those legacy functions on its Z mainframe systems, wants that code rewritten in Java.

In a technical blog post specific to COBOL conversion, IBM’s Kyle Charlet, CTO for zSystems software, steps up to the plate and says what a lot of people have said about COBOL: It’s not just the code; it’s the business logic, the edge-cases, and the institutional memory, or the lack thereof.

IBM’s watsonx, Charlet writes, could help large organizations decouple individual services from monolithic COBOL apps.

While COBOL codebases can be relatively stable and secure—once found to be among the least problematic in a broad survey—the costs of updating and extending them are gigantic.

Legacy COBOL was one of the reasons the Office of Personnel Management suffered a deeply intrusive break-in in 2015, as the antiquated code could not be encrypted or made to work with other secure systems.

But there’s a recurring argument that COBOL is good at managing business-specific systems and exchanges in ways that (some might argue) present fewer attack vectors.

The original article contains 458 words, the summary contains 172 words. Saved 62%. I’m a bot and I’m open source!


I know two people who wrote COBOL code for some major healthcare systems a long time ago, and about five years ago they came out of retirement to update and convert that code. They made six-figure salaries doing it from home.
